The main focus of my residency was to experiment with multi-channel audio treatments, with the goal of adding them to Metamorphic_Gestures, the performance environment that I regularly perform with. I have been working on this series of patches for several years and have done a few multi-channel performances, usually with an 8-channel sound system. Unfortunately, it always feels very rushed, and I inevitably lose the time and attention to really focus on the issues of spatialization and instead just end up moving point sources around the various speakers. I have worked on multi-channel pieces in the studio before, and wanted to try to incorporate some of the more complex gestures and textures into the live setting.

The first thing I tested out was the individual panning of overlapping grains in a granular process. I created this subpatch:

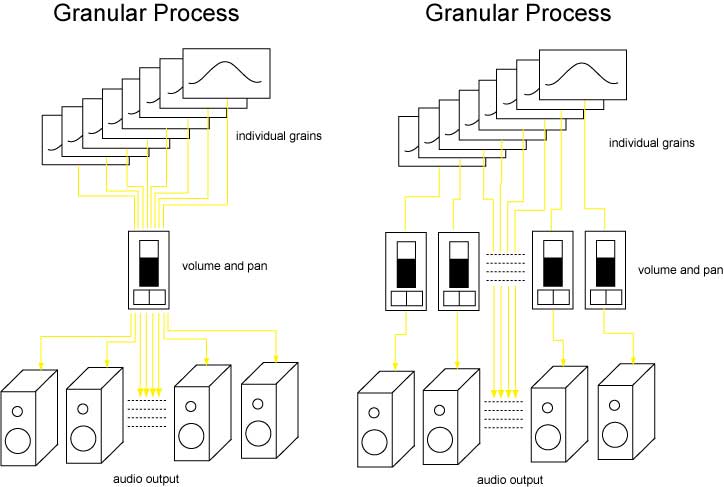

It gives you an x-y graphical display to control both the angle and the distance of each of the 8 subgrains (as opposed to using the panner to move the mix of the 8 grains). Here is a schematic of the signal flow:

(Actually, I just realized that this schematic is a little incorrect – the volume and pan on each grain at the right should actually be connected to all 8 of the output channels – too many lines to draw though…). I also added in a function to automatically trigger a move to a new position over a given interpolation time.
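To make the per-grain panning idea concrete, here is a rough sketch in Python. It is not the patch itself: the ring of 8 equally spaced speakers, the equal-power panning between the two nearest speakers, the `1 / (1 + distance)` level law, and the names `ring_gains` and `interpolate_position` are all assumptions made for illustration. Each grain gets its own list of 8 gains, one per output channel, and a move to a new position is a simple interpolation of angle and distance over time.

```python
import math

NUM_SPEAKERS = 8  # assumption: speakers equally spaced on a ring


def ring_gains(angle_deg, distance, num_speakers=NUM_SPEAKERS):
    """Return one gain per speaker for a single grain.

    Uses equal-power panning between the two speakers adjacent to the
    grain's angle, scaled by an assumed 1 / (1 + distance) level law.
    """
    spacing = 360.0 / num_speakers
    pos = (angle_deg % 360.0) / spacing      # position in speaker units
    lower = int(pos) % num_speakers          # speaker just below the angle
    upper = (lower + 1) % num_speakers       # next speaker around the ring
    frac = pos - int(pos)                    # 0..1 between the two speakers
    level = 1.0 / (1.0 + max(distance, 0.0))
    gains = [0.0] * num_speakers
    gains[lower] = level * math.cos(frac * math.pi / 2)
    gains[upper] = level * math.sin(frac * math.pi / 2)
    return gains


def interpolate_position(start, end, t):
    """Interpolate (angle_deg, distance) from start to end, with t in 0..1.

    The angle takes the shorter way around the ring.
    """
    start_angle, start_dist = start
    end_angle, end_dist = end
    delta = ((end_angle - start_angle + 180.0) % 360.0) - 180.0
    angle = (start_angle + t * delta) % 360.0
    dist = start_dist + t * (end_dist - start_dist)
    return angle, dist


# Eight grains, each with its own (angle, distance) target.
grain_positions = [(i * 45.0 + 10.0, 0.5) for i in range(8)]
grain_gains = [ring_gains(a, d) for a, d in grain_positions]
```

In a live setting, `interpolate_position` would be called repeatedly with `t` rising from 0 to 1 over the interpolation time, and the resulting gains applied to each grain's signal.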

The results were pretty amazing (I’m sure this has all been done before, but for me it was a very new experience). All of a sudden the sound became more dynamic and somehow alive. Also, changing the size of the grain had an interesting effect which is lost in the stereo reduction. This got me thinking about what other types of audio treatments could benefit from this type of lower-level multi-channel routing.

The next thing I tried was a series of filtering patches I use, which basically create chord clusters of up to 32 resonant filters, each tuned to a specific frequency. In the past, the outputs of the filters were added together and the sum was then spatialized. I had a similar reaction when I started to individually pan the filters in the clusters. It is exactly the same audio signal, but when it is diffused this way it achieves a very different and enveloping psychoacoustic effect. With these processes there is an element of uncertainty about which notes/frequencies will be excited by a given impulse. When you include the uncertainty of where that sound will then come from, it adds another dimension to the process. Very fun.
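Here is a small Python sketch of the difference between summing the filters first and routing each filter on its own. Everything in it is an assumption for illustration rather than the actual patch: the two-pole resonator, the random choice of one speaker per filter, and the names `resonator` and `diffuse_cluster` are all hypothetical. It also shows the point about the signal being the same: adding the 8 channels back together gives the original summed cluster.

```python
import math
import random


def resonator(signal, freq_hz, sample_rate=44100, r=0.999):
    """Two-pole resonant filter tuned to freq_hz (assumed filter type)."""
    w = 2.0 * math.pi * freq_hz / sample_rate
    a1 = 2.0 * r * math.cos(w)
    a2 = -r * r
    y1 = y2 = 0.0
    out = []
    for x in signal:
        y = x + a1 * y1 + a2 * y2
        out.append(y)
        y2, y1 = y1, y
    return out


def diffuse_cluster(signal, freqs, num_speakers=8, seed=None):
    """Send each filter's output to its own randomly chosen speaker.

    Returns (channels, assignment): one list of samples per speaker,
    and the speaker index chosen for each filter.
    """
    rng = random.Random(seed)
    channels = [[0.0] * len(signal) for _ in range(num_speakers)]
    assignment = []
    for freq in freqs:
        speaker = rng.randrange(num_speakers)
        assignment.append(speaker)
        for i, sample in enumerate(resonator(signal, freq)):
            channels[speaker][i] += sample
    return channels, assignment


# An impulse exciting a small three-filter cluster.
impulse = [1.0] + [0.0] * 63
cluster = [220.0, 330.0, 440.0]
channels, assignment = diffuse_cluster(impulse, cluster, seed=1)
```

Choosing a single speaker per filter keeps the sketch short; a per-filter angle and distance, as with the grains, would be the more flexible version.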

For two days in the second week I was fortunate to be joined by UK-based composer and performer Lawrence Williams. We had discussed working on a series of improvisations using contact microphones attached to his saxophone and various other instruments he uses (dictaphone, melodica, tuner/metronome, various bottles, etc).

We had some very interesting results, many of which used some of the new spatialization patches I had been working on. He was also very interested in working with feedback inside the sax, which he can control by pressing or releasing keys, effectively changing the size of the space that is feeding back. I will post a few excerpts from our experiments here (http://www.konradkaczmarek.com/steim).

In all, it was a very successful residency, and I found the working environment there well suited to this kind of creative experimentation. I look forward to using this new arsenal of spatialization tools in my upcoming performances. Thanks again!